How to Detect AWS S3 Bucket Misconfigurations Using Open-Source Tools

Written By: Sai Adithya Thatipalli

Ever since the emergence of cloud computing, cloud services and their offerings have grown exponentially. Tech giants like Microsoft, AWS, and Google provide platforms for building applications and services on their cloud infrastructure. Microsoft and AWS compete to lead the market, offering services that range from IaaS, PaaS, and SaaS to serverless architecture.

Among the wide range of services AWS provides, one of the most widely used is S3 (Simple Storage Service).

What Is AWS S3, and What Is Its Purpose?

S3 stands for Simple Storage Service. It is a flexible, scalable storage service offered by AWS for hosting data over both the short term and the long term.

S3 buckets can serve as the back end for hosting static websites, or simply as storage for media that applications retrieve from the cloud, which avoids infrastructure expenses. As demand grew, AWS expanded S3 to scale both horizontally and vertically. As a result, S3 became part of many application ecosystems, and also a visible attack surface.

What Are AWS S3 Bucket Misconfigurations?

Creating an S3 bucket involves configuring bucket policies, IAM access, which data (if any) is exposed to the public, secure transport, and data transfer for disaster recovery. If we overlook critical settings, the bucket can end up misconfigured and become a target for attackers who want to access sensitive information.

According to statistics from the security firm Skyhigh Networks, 7% of all S3 buckets have unrestricted public access, and 35% are unencrypted.

By default, when we create an S3 bucket, AWS creates it as private, and the bucket owner (the AWS account that created it) has full access. AWS also applies a restrictive default access control list (ACL), so that only the owner has access to the bucket.

Securely configuring an S3 bucket involves three major categories:

- IAM role creation and assignment

- Public and private availability, with read/write access, in bucket policies

- Access control lists (ACLs)
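For the bucket-policy category above, one quick check is to look for `Allow` statements whose principal is a wildcard, since `"*"` or `{"AWS": "*"}` means anyone, including anonymous users. The following is a minimal sketch, assuming the policy is available as a JSON string; the bucket name, account ID, and `Sid` values in the example are hypothetical. It deliberately skips statements that carry a `Condition`, because those may still be scoped (for example, to a VPC), so it will miss some cases.

```python
import json


def public_statements(policy_json: str) -> list[str]:
    """Return the Sid (or position) of every unconditioned Allow statement open to anyone."""
    policy = json.loads(policy_json)
    statements = policy.get("Statement", [])
    # A policy may contain a single statement object instead of a list.
    if isinstance(statements, dict):
        statements = [statements]
    findings = []
    for i, stmt in enumerate(statements):
        if stmt.get("Effect") != "Allow":
            continue
        principal = stmt.get("Principal")
        # "*" and {"AWS": "*"} both mean "any principal, including anonymous users".
        is_wildcard = principal == "*" or (
            isinstance(principal, dict) and principal.get("AWS") in ("*", ["*"])
        )
        # Conditioned statements may still be restricted, so this sketch ignores them.
        if is_wildcard and "Condition" not in stmt:
            findings.append(stmt.get("Sid", f"statement[{i}]"))
    return findings


# Hypothetical policy: one public statement and one scoped to a single IAM role.
example_policy = json.dumps({
    "Version": "2012-10-17",
    "Statement": [
        {"Sid": "PublicRead", "Effect": "Allow", "Principal": "*",
         "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
        {"Sid": "AppAccess", "Effect": "Allow",
         "Principal": {"AWS": "arn:aws:iam::111122223333:role/app"},
         "Action": "s3:GetObject", "Resource": "arn:aws:s3:::example-bucket/*"},
    ],
})
print(public_statements(example_policy))  # ['PublicRead']
```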

Once the bucket is created, the administrator should build a robust policy: create users with IAM and assign them roles with a custom level of access instead of "Full Access". Read/write access can be narrowed down to the directory or subdirectory level, since the bucket may contain sensitive or configuration files. When creating bucket policies, IAM or root users should ensure that only the information that genuinely needs to be public is exposed.
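To illustrate narrowing read/write access to a directory, here is a minimal sketch of an IAM policy document scoped to a single key prefix (S3 "directories" are key prefixes). The bucket name `example-bucket` and prefix `reports` are hypothetical, and the exact set of actions you grant will depend on the application.

```python
import json


def prefix_scoped_policy(bucket: str, prefix: str) -> dict:
    """Build an IAM policy that allows read/write only under one prefix ("directory")."""
    prefix = prefix.rstrip("/") + "/"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Allow listing the bucket, but only for keys under the prefix.
                "Sid": "ListOnlyThePrefix",
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}*"]}},
            },
            {
                # Read and write objects inside the prefix only, not the whole bucket.
                "Sid": "ReadWriteInsidePrefix",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            },
        ],
    }


# Hypothetical bucket and prefix names, for illustration only.
print(json.dumps(prefix_scoped_policy("example-bucket", "reports"), indent=2))
```

Note that `s3:ListBucket` applies to the bucket ARN, while object actions apply to object ARNs, which is why the policy needs two statements.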

S3 buckets should be protected on all sides using ACLs. The administrator should allow only authorized traffic to access data in the bucket. Any negligence or mishandling can leave the bucket misconfigured and turn it into an attack surface that can be exploited to access sensitive data.
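A common ACL mistake is granting access to the predefined `AllUsers` group (anyone) or `AuthenticatedUsers` group (any AWS account, not just yours). The sketch below flags such grants. It assumes the ACL is a dictionary shaped like the `Owner`/`Grants` structure returned by boto3's `get_bucket_acl`; the sample ACL and owner ID are hypothetical.

```python
# Group URIs that S3 uses in ACL grants for "everyone" and "any AWS account".
ALL_USERS = "http://acs.amazonaws.com/groups/global/AllUsers"
AUTHENTICATED_USERS = "http://acs.amazonaws.com/groups/global/AuthenticatedUsers"


def risky_grants(acl: dict) -> list[tuple[str, str]]:
    """Return (group name, permission) pairs for grants made to public groups."""
    findings = []
    for grant in acl.get("Grants", []):
        grantee = grant.get("Grantee", {})
        if grantee.get("Type") == "Group" and grantee.get("URI") in (ALL_USERS, AUTHENTICATED_USERS):
            group = grantee["URI"].rsplit("/", 1)[-1]
            findings.append((group, grant.get("Permission", "")))
    return findings


# Hypothetical ACL: the owner has full control, and everyone can read.
sample_acl = {
    "Owner": {"ID": "owner-canonical-id"},
    "Grants": [
        {"Grantee": {"Type": "CanonicalUser", "ID": "owner-canonical-id"}, "Permission": "FULL_CONTROL"},
        {"Grantee": {"Type": "Group", "URI": ALL_USERS}, "Permission": "READ"},
    ],
}
print(risky_grants(sample_acl))  # [('AllUsers', 'READ')]
```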

Below are some common misconfigurations.

- Disabling access logs

When configuring the bucket, it is important to enable logging so that unauthorized modifications can be monitored.

- Making the S3 bucket public

A public S3 bucket can leak information, as attackers constantly perform reconnaissance to identify open S3 buckets.

- Not enforcing MFA on S3 buckets

An additional layer of authentication can prevent many attacks.

- Granting full read/write access to unauthorized users

Configuring read/write access correctly plays a major role in securing S3 buckets.

- Disabling data encryption

There is a common misconception that because the service provider secures the traffic, the data is secure as well. TLS/SSL only secures data in transit; the stored data is not safe until data encryption is enabled.

- Not configuring S3 Transfer Acceleration

When drawing up a disaster recovery plan, infrastructure teams should make use of the AWS Transfer Acceleration feature, which speeds up long-distance transfers of data to and from a bucket.
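The misconfigurations listed above can be turned into a simple checklist. The sketch below uses a hypothetical, simplified `BucketSettings` record rather than any real AWS response format; in practice each field would have to be filled in from the corresponding bucket settings. Mapping "enforcing MFA" to S3's MFA Delete setting is an assumption made for this example.

```python
from dataclasses import dataclass


@dataclass
class BucketSettings:
    """Hypothetical, simplified view of a bucket's security-relevant settings."""
    access_logging: bool
    public: bool
    mfa_delete: bool  # assumption: "MFA enforced" modeled as S3 MFA Delete
    unauthorized_read_write: bool
    default_encryption: bool
    transfer_acceleration: bool


def audit(name: str, s: BucketSettings) -> list[str]:
    """Return one finding per misconfiguration from the list above."""
    checks = [
        (not s.access_logging, "access logging is disabled"),
        (s.public, "bucket is publicly accessible"),
        (not s.mfa_delete, "MFA Delete is not enabled"),
        (s.unauthorized_read_write, "unauthorized users have read/write access"),
        (not s.default_encryption, "default encryption is disabled"),
        (not s.transfer_acceleration, "transfer acceleration is not configured"),
    ]
    return [f"{name}: {message}" for failed, message in checks if failed]


# Hypothetical bucket with three problems.
settings = BucketSettings(
    access_logging=False, public=True, mfa_delete=False,
    unauthorized_read_write=False, default_encryption=True, transfer_acceleration=True,
)
for finding in audit("example-bucket", settings):
    print(finding)
```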

How to Identify Misconfigured AWS S3 Buckets

As part of their bug hunting and security research, many security researchers have created custom scripts to enumerate, scan, and identify publicly accessible and reachable S3 buckets.

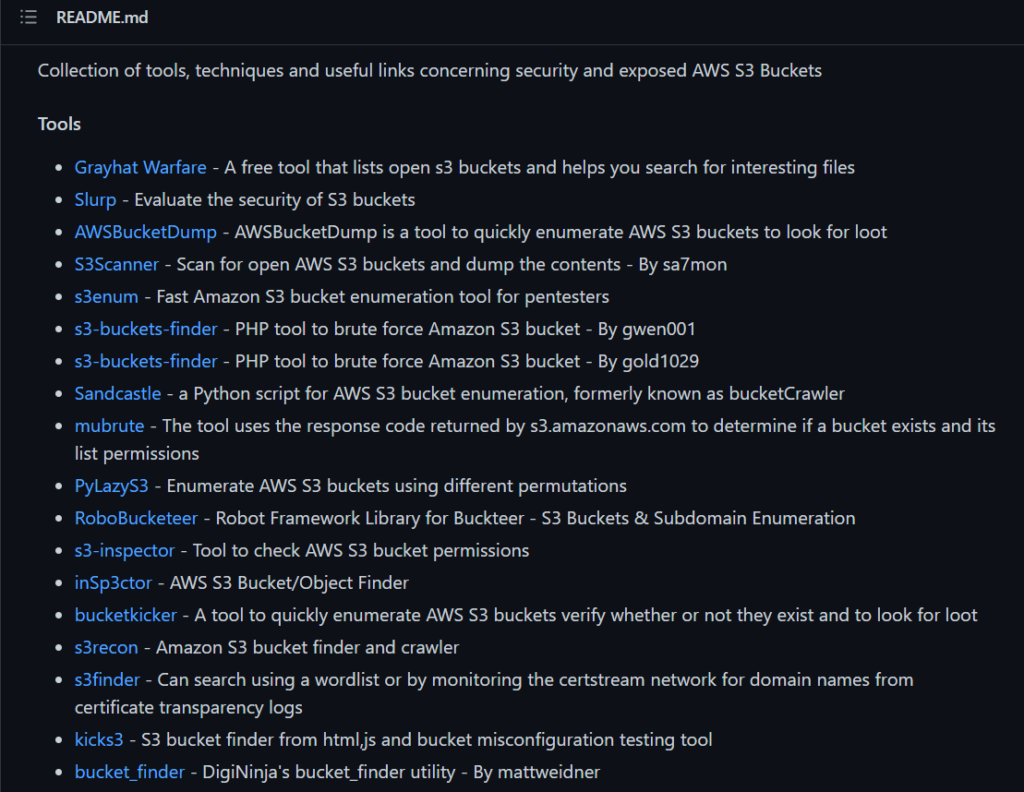

Awesome-sec-s3

URL: https://github.com/mxm0z/awesome-sec-s3

This repository collects many tools for enumerating, scanning, and investigating misconfigured S3 buckets.

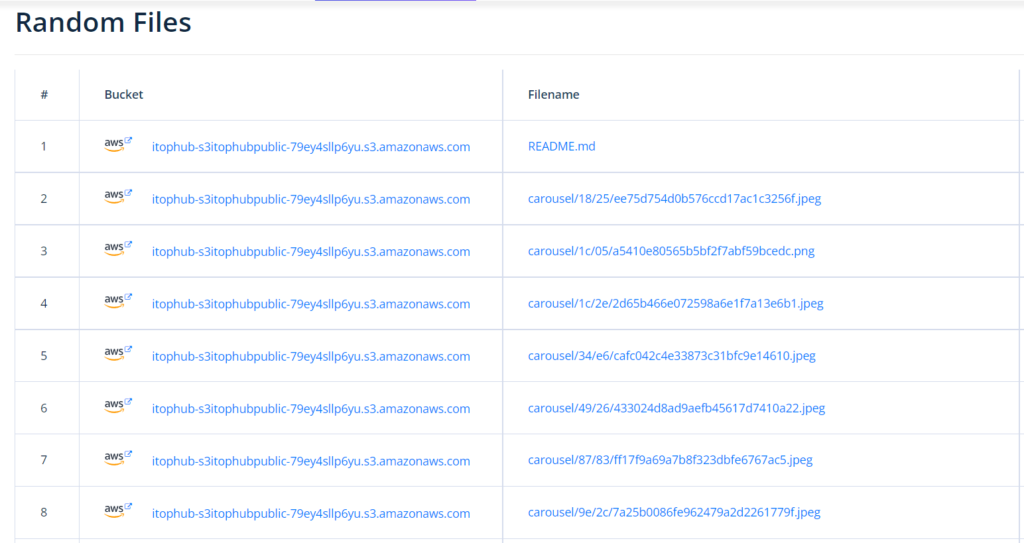

Grayhat Warfare is essentially Shodan for S3 buckets. It maintains a huge, regularly updated index of public buckets. However, it is a premium service, so not all features are available unless we pay for it.

In the free version, we can use the site's "Random Files" option, which shows a random selection of files from its index.

LazyS3

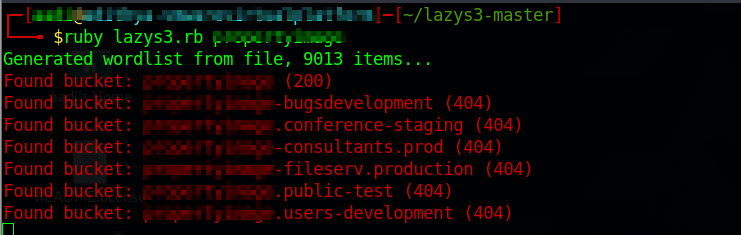

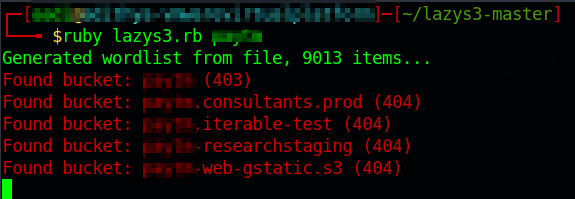

LazyS3 is a Ruby script developed by the well-known security researcher NahamSec, head of content at HackerOne (H1). It is available in his GitHub repository along with usage instructions. It combines the keyword we supply with a wordlist, checks each resulting bucket name, and reports the HTTP status code returned for each.

The screenshots above show the script in action (the company name has been hidden for privacy reasons).

In these images, you can see that we supplied a company name and ran the script. The script combines the name with a list of common prefixes used in bucket naming conventions.
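The idea of combining a company name with common naming patterns can be sketched as below. This is not LazyS3's actual code or wordlist: the words and delimiters are hypothetical placeholders. The regular expression applies the basic S3 naming rules (3 to 63 characters; lowercase letters, digits, dots, and hyphens; starting and ending with a letter or digit).

```python
import re
from itertools import product

# Hypothetical wordlist and delimiters; LazyS3 ships its own list of common prefixes.
COMMON_WORDS = ["dev", "staging", "prod", "backup"]
DELIMITERS = ["", "-", "."]

# Basic S3 bucket naming rules: 3-63 chars, lowercase alphanumerics, dots, hyphens.
VALID_NAME = re.compile(r"^[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]$")


def candidate_buckets(keyword: str, words=COMMON_WORDS, delimiters=DELIMITERS) -> list[str]:
    """Combine a keyword with common words on either side and keep valid bucket names."""
    keyword = keyword.lower()
    names = {keyword}
    for word, sep in product(words, delimiters):
        names.add(f"{word}{sep}{keyword}")  # e.g. dev-acme
        names.add(f"{keyword}{sep}{word}")  # e.g. acme-dev
    return sorted(name for name in names if VALID_NAME.match(name))


print(candidate_buckets("Acme", ["dev"], ["-"]))  # ['acme', 'acme-dev', 'dev-acme']
```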

The status codes are interpreted as follows:

- 200: the bucket exists and is accessible

- 403: the bucket exists, but access is restricted

- 404: the bucket was not found
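The status-code interpretation above can be sketched with Python's standard library. This is an illustration, not how LazyS3 itself is implemented: it assumes an unauthenticated GET to the bucket's global virtual-hosted-style URL (`https://<bucket>.s3.amazonaws.com/`), and any code other than 200, 403, or 404 (for example, a region redirect) is simply reported as "other".

```python
import urllib.error
import urllib.request

# Interpretation of status codes as described in the article.
MEANINGS = {
    200: "accessible",
    403: "exists, access restricted",
    404: "not found",
}


def classify(status: int) -> str:
    """Map an HTTP status code to its meaning for bucket enumeration."""
    return MEANINGS.get(status, f"other ({status})")


def probe(bucket: str, timeout: float = 5.0) -> str:
    """Send an unauthenticated GET to the bucket's URL and classify the response."""
    url = f"https://{bucket}.s3.amazonaws.com/"
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return classify(resp.status)
    except urllib.error.HTTPError as err:
        # urllib raises on 4xx/5xx, but the exception still carries the status code.
        return classify(err.code)
    except urllib.error.URLError as err:
        # DNS failures, timeouts, and similar network errors.
        return f"error ({err.reason})"
```

For example, `probe("example-bucket")` (a hypothetical name) returns one of the strings above, depending on how S3 responds.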

Many other scripts use endpoint URLs, the AWS API, and region-by-region scanning to identify misconfigured S3 buckets.
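Region-based scanning usually means trying the same bucket name against each regional endpoint. The sketch below only builds the URLs, in both the virtual-hosted style (`https://<bucket>.s3.<region>.amazonaws.com/`) and the older path style (`https://s3.<region>.amazonaws.com/<bucket>/`). The short region list is a hypothetical subset; a real scan would use the full, current list of regions.

```python
# Hypothetical subset of regions; a real scan would use the full, current list.
REGIONS = ["us-east-1", "eu-west-1", "ap-south-1"]


def endpoint_urls(bucket: str, regions=REGIONS) -> list[str]:
    """Build virtual-hosted-style and path-style URLs for a bucket in each region."""
    urls = []
    for region in regions:
        urls.append(f"https://{bucket}.s3.{region}.amazonaws.com/")  # virtual-hosted style
        urls.append(f"https://s3.{region}.amazonaws.com/{bucket}/")  # path style
    return urls


for url in endpoint_urls("example-bucket", ["eu-west-1"]):
    print(url)
```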

How to Mitigate Misconfigurations

AWS administrators should follow best practices when creating and managing buckets. AWS's security controls and best practices outline the steps for securing buckets and the information within them.

- Use vulnerability scanners that integrate misconfiguration signatures with enterprise vulnerability scanning capabilities. These scanners provide comprehensive reports with severity ratings, remediation steps, and more.

- Restrict access to a limited set of users and create IAM policies to prevent unauthorized access.

- Encrypt S3 buckets to help prevent information leakage.
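Encryption at rest and encryption in transit can both be enforced through bucket configuration. The sketch below builds two request bodies: a default server-side encryption configuration (SSE-S3 with `AES256`, or SSE-KMS with `aws:kms`) and a bucket policy that denies any request not sent over TLS, using the `aws:SecureTransport` condition key. The bucket name is hypothetical.

```python
import json


def default_encryption_config(kms: bool = False) -> dict:
    """ServerSideEncryptionConfiguration body: SSE-S3 (AES256) or SSE-KMS (aws:kms)."""
    algorithm = "aws:kms" if kms else "AES256"
    return {"Rules": [{"ApplyServerSideEncryptionByDefault": {"SSEAlgorithm": algorithm}}]}


def deny_insecure_transport(bucket: str) -> dict:
    """Bucket policy that rejects every request not sent over TLS."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "DenyInsecureTransport",
            "Effect": "Deny",
            "Principal": "*",
            "Action": "s3:*",
            "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
            "Condition": {"Bool": {"aws:SecureTransport": "false"}},
        }],
    }


print(json.dumps(default_encryption_config(), indent=2))
print(json.dumps(deny_insecure_transport("example-bucket"), indent=2))
```

With boto3, these bodies could be applied through the S3 client's `put_bucket_encryption(Bucket=..., ServerSideEncryptionConfiguration=...)` and `put_bucket_policy(Bucket=..., Policy=json.dumps(...))` calls. Using an explicit `Deny` means the rule holds even if another statement allows the request.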